
Ahmed Arat - 22 Nov, 2025


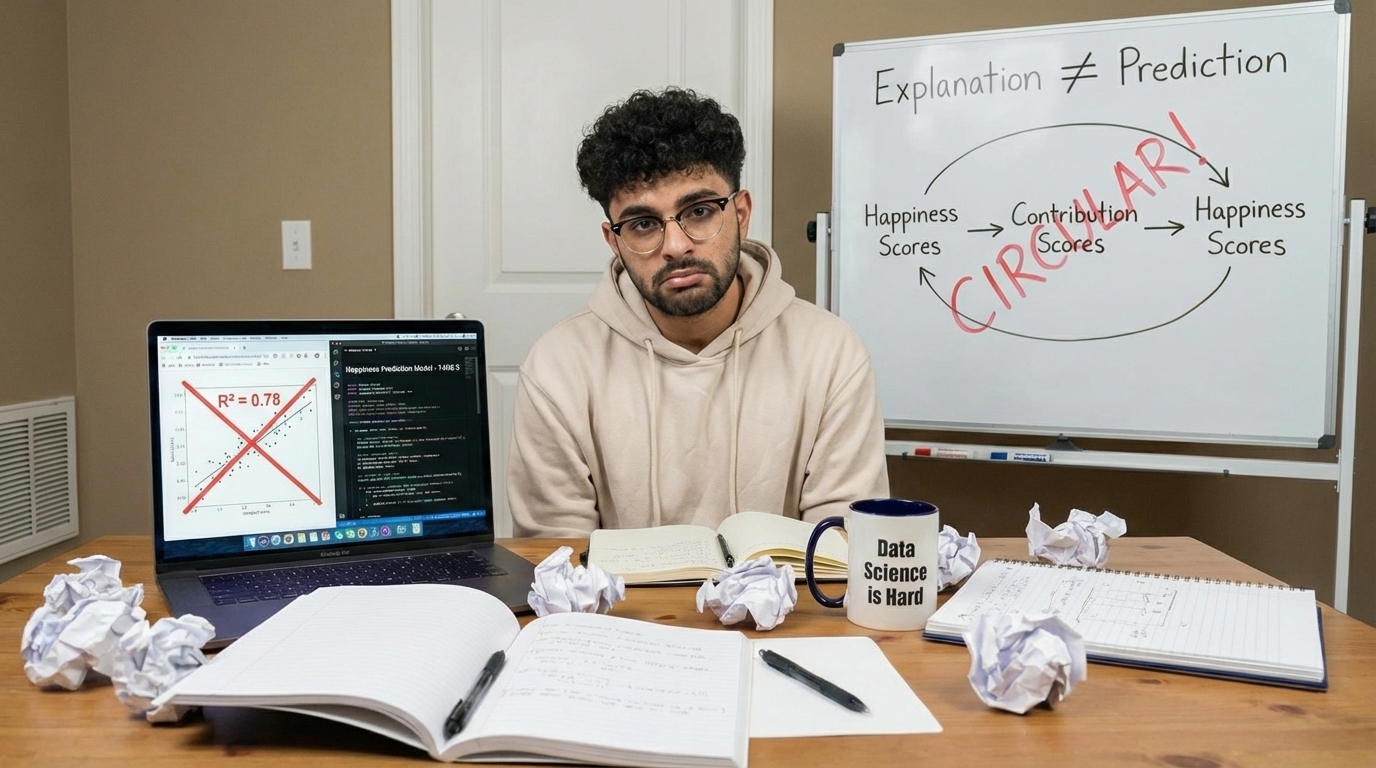

I Built a Complex Model to Predict Happiness. A Dumb Guess Beat It.

Here's the result up front, because you deserve to know what you're getting into. I trained a multiple linear regression model on over a decade of World Happiness Report data merged with World Bank indicators. Six predictors: GDP per capita, life expectancy, social support, freedom, trust, and generosity. Proper temporal train-test split: train on 2010–2021, test on 2022 onwards. The works.

Then I tested a model that just says "next year's happiness score equals this year's." No features, no coefficients, no thinking at all.

| Model | RMSE |
|---|---|
| Naive Baseline ("same as last year") | 0.1872 |
| Full Structural Model (6 predictors) | 0.5306 |
| Pure Economic Model (GDP + Health) | 0.6336 |

The dumb guess won by nearly a factor of three.

This isn't a story about a shit model. The model is actually brilliant... at the wrong task. It is in fact a story about the difference between explanation and prediction, about why those are fundamentally different things, and about why conflating them led me to nearly publish something so embarrassingly wrong that my blogging career would have been over before it began.

The Mistake That Started Everything

A few months ago, I wrote a glowing blog post about predicting national happiness. R² of 0.78! RMSE of 0.44 on test data! I was rather chuffed with myself. I thought I'd cracked human well-being with a linear model and some World Bank data. As one does.

That post never got published, thank fuck.

The problem wasn't even the statistics, you see. The problem was that I never asked the most basic question in all of forecasting: can a braindead baseline beat me? I just looked at the RMSE, thought "ooh, 0.44, that's small," and declared victory. I never checked what "small" meant in context.

There was also a deeper methodological rot, which I've written about separately in [companion post link]. Short version: my original predictors were derived from the happiness scores themselves, creating a tautology dressed up as science.
I rebuilt the entire dataset from scratch using raw World Bank indicators and independent WHR survey responses. That story's worth reading on its own, but for now, just know that the dataset underpinning everything below is clean: 1,358 country-year observations, 2010–2024, no circular reasoning.

The Warning Sign I Should Have Heeded

Before building anything, I checked whether happiness scores exhibit temporal inertia. That is, whether this year's score predicts next year's.

```r
library(dplyr)
library(tidyr)  # drop_na()

# Pair each country-year with the same country's previous-year score
df_lagged <- df %>%
  arrange(country, year) %>%
  group_by(country) %>%
  mutate(happiness_prev = lag(happiness_score)) %>%
  drop_na(happiness_prev)

# Correlation between this year's score and last year's
inertia_cor <- cor(df_lagged$happiness_score, df_lagged$happiness_prev)
```

r = 0.9848

Square that and you get r² ≈ 0.97: roughly ninety-seven percent of the variance in this year's happiness is explained by last year's happiness. Every point clusters tightly around y = x.

That should have been the moment I put down the keyboard and went to bed. If inertia explains 97% of the variance, my model would need to find a meaningful signal in the remaining 3%. I don't know how else to describe that ambition other than stupid at best.

National happiness is structurally stable. Denmark doesn't suddenly become poor. Afghanistan doesn't suddenly develop Nordic-level institutions. The factors that determine happiness change glacially. A 2% GDP growth rate doesn't shift a country's happiness score from 7.6 to 7.8. Inertia is far more likely to dictate next year's score than a few percentage points of growth in wealth, health, freedom, or trust.

But I pressed on, because I'm stubborn and hadn't yet learned my lesson.

I also checked multicollinearity across the predictors. GDP and life expectancy correlate at r ≈ 0.7–0.8, which makes sense. After all, rich countries are healthy countries. VIF for log GDP hit 5.0, right at the conventional threshold for concern. This doesn't invalidate the model for inference (the combined effect is stable), but it means you can't confidently rank individual predictors' importance.
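For a feel of what a VIF number actually measures, here is a minimal sketch in base R. The data is simulated purely for illustration (the variable names and the 0.75 correlation are assumptions, not values from the real dataset), and `vif_manual` is a hypothetical helper, not part of any package. The post's toolchain lists `car`, whose `vif()` function presumably produced the real figure; the hand-rolled version just makes the definition visible: VIF for a predictor is 1 / (1 − R²), where R² comes from regressing that predictor on all the others. A VIF of 5 therefore means the other predictors jointly explain 80% of the variance in log GDP.

```r
# Toy data: every value here is simulated, not taken from the post's dataset.
set.seed(42)
n <- 500
log_gdp <- rnorm(n)
# Build a "health" variable that correlates with log GDP at roughly r = 0.75
health  <- 0.75 * log_gdp + sqrt(1 - 0.75^2) * rnorm(n)
freedom <- rnorm(n)  # an unrelated predictor, for contrast
X <- data.frame(log_gdp, health, freedom)

# Hypothetical helper: VIF_j = 1 / (1 - R^2_j), where R^2_j is the R-squared
# from regressing predictor j on all remaining predictors.
vif_manual <- function(X) {
  sapply(names(X), function(j) {
    others <- setdiff(names(X), j)
    r2 <- summary(lm(reformulate(others, response = j), data = X))$r.squared
    1 / (1 - r2)
  })
}

vifs <- vif_manual(X)
print(round(vifs, 2))
```

In this toy setup the two correlated predictors get inflated VIFs while the unrelated one stays close to 1. The higher the VIF, the more the regression struggles to separate one predictor's contribution from another's, which is why individual coefficient rankings deserve suspicion.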
Keep that in mind.

Where the Model Actually Shines

Here's the thing that makes this story interesting rather than just depressing: the model is actually pretty good at explaining why some countries are happier than others.

```r
# Cross-sectional (explanatory) model on the full dataset
model <- lm(happiness_score ~ log(raw_gdp) + raw_health + social_support +
              freedom + trust + generosity, data = df)
```

R² = 0.765. F-statistic of 733 on 6 and 1,349 degrees of freedom. Every predictor significant. This model explains three-quarters of the cross-country, cross-year variance in happiness.

Freedom leads at 1.35 points per unit increase. Trust and social support follow. GDP does matter but isn't the be-all and end-all. The social and political environment (freedom to make life choices, trust in institutions, having people you can rely on) matters more than raw wealth. Health has the smallest coefficient (0.02), but it's measured in years of life expectancy, so a decade of extra lifespan adds 0.2 points. Not actually trivial.

So far, this aligns with decades of well-being research. Money buys happiness logarithmically. Going from poverty to security is transformative, whilst going from comfortable to luxurious barely registers. But the institutional and social fabric of a country matters even more.

The model tells you why Finland is an 8 and Afghanistan is a 3. It answers that question beautifully. It just can't tell you what either of them will score next year.

The Humbling

I set up a three-way fight. Proper temporal validation: train on everything before 2022, test on 2022 onwards.

- The Pure Model uses only GDP and health. The economist's bet, if you will: money and medicine are all that matter.
- The Hybrid Model uses all six predictors. The full structural story.
- The Naive Baseline predicts that next year's score equals this year's score. The dumb guess.
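A forecasting setup like this needs a target column holding next year's score for each country-year row. The construction of `happiness_future` isn't shown in the post, so the following is only a sketch of one plausible way to build it with `dplyr::lead()`, using a made-up toy panel (`toy` and its values are hypothetical).

```r
library(dplyr)

# Hypothetical toy panel: two countries, three years each (values are made up)
toy <- data.frame(
  country = rep(c("A", "B"), each = 3),
  year = rep(2020:2022, times = 2),
  happiness_score = c(7.0, 7.1, 7.2, 4.0, 3.9, 4.1)
)

toy_future <- toy %>%
  arrange(country, year) %>%
  group_by(country) %>%
  # Next row's score within the same country; NA for each country's final year
  mutate(happiness_future = lead(happiness_score)) %>%
  ungroup() %>%
  # Rows without a known next-year score cannot be used for training or testing
  filter(!is.na(happiness_future))

print(toy_future)
```

One caveat with this approach: `lead()` shifts by row, not by calendar year, so it only gives "next year" if every country has consecutive years with no gaps. A panel with missing years would need a join on `year + 1` instead.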
```r
library(dplyr)
library(Metrics)  # rmse(actual, predicted)

# Assumes df already carries log_gdp and happiness_future (next year's score),
# and that rows without a next-year score have been dropped.
train_time <- df %>% filter(year < 2022)
test_time  <- df %>% filter(year >= 2022)

model_pure   <- lm(happiness_future ~ log_gdp + raw_health, data = train_time)
model_hybrid <- lm(happiness_future ~ log_gdp + raw_health + social_support +
                     freedom + trust, data = train_time)

pred_pure   <- predict(model_pure, newdata = test_time)
pred_hybrid <- predict(model_hybrid, newdata = test_time)
pred_naive  <- test_time$happiness_score  # "same as this year"

rmse_pure   <- rmse(test_time$happiness_future, pred_pure)
rmse_hybrid <- rmse(test_time$happiness_future, pred_hybrid)
rmse_naive  <- rmse(test_time$happiness_future, pred_naive)
```

You've already seen the results. The naive baseline's RMSE of 0.19 annihilates the hybrid model's 0.53 and the pure model's 0.63. The model with no features, no training, no thinking whatsoever is 2.8× more accurate than my carefully constructed structural model.

Why? Because happiness changes year-to-year are tiny compared to the cross-sectional differences the model was built to explain. The model learns "Denmark is happy because it's rich, free, and trusting." That's true. But it doesn't help you forecast whether Denmark moves from 7.58 to 7.61 or 7.55 next year. That movement is smaller than the model's error margin. The naive baseline succeeds precisely because it assumes nothing changes, and almost nothing does.

I also tried spatial generalisation: I held out 20 random countries, trained on the rest, and predicted the unseen ones. RMSE: 0.59. That sits between the two temporal forecasting results and is still mediocre, but for a different reason: individual countries have unique cultural, historical, and institutional contexts that no six-variable regression can fully capture.

The model handles countries near the global mean reasonably well but struggles with outliers: the exceptionally happy Nordics, the war-torn states. These are the countries defined most strongly by factors no regression coefficient can reach.

Lessons Learnt (Write These DOWN FFS!)

The distinction between explanation and prediction isn't semantic. They require different validation, different interpretation, and different humility.

Inference asks: "What factors explain the current state of the world?" You test it with goodness-of-fit measures: R², F-tests, p-values. My model scores brilliantly here. It tells you that freedom, trust, and social support drive happiness more than raw GDP. That's actually useful for policymakers.

Prediction asks: "What will happen next?" You test it with out-of-sample error against a naive baseline. My model fails here: an RMSE of 0.53 against the baseline's 0.19.

Both results are valuable. But they answer completely different questions, and conflating them the way I did in my original post inevitably leads to overconfident claims that collapse under scrutiny.

There's a deeper philosophical point here, and I ask you to forgive the stench of pretentiousness it bears: just because you can't predict something doesn't mean you don't understand it. We understand why Finland is happy. We understand what structural factors drive national well-being. We just can't forecast the year-to-year fluctuations, because those fluctuations are smaller than the measurement noise and dominated by inertia. It's not really a failure of understanding as much as it is a property of the phenomenon.

If you're a policymaker, this means: focus on structural reforms. Invest in institutions, healthcare, social safety nets, democratic freedoms. These are the levers that determine long-run well-being. Don't chase quarterly happiness indicators. They're mostly inertia, and whatever short-term movement remains is largely noise.

If you're a data scientist, this means: always, always test against a naïve baseline. And never confuse a high R² with predictive power. They're not the same thing, and learning that the hard way is how you end up writing a 3,000-word mea culpa instead of publishing something wrong.
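To see why a high R² and a losing forecast can coexist, here is a small base-R simulation. Everything in it is an assumption chosen for illustration (the panel is synthetic, the variable names and noise levels are invented, and `rmse` is a local helper rather than the `Metrics` version): each country gets a fixed structural factor, a stable unobserved trait, and a little yearly noise.

```r
set.seed(1)
n_countries <- 100
years <- 2010:2020

# Synthetic panel (all numbers hypothetical)
x <- rnorm(n_countries)             # slow-moving structural factor, fixed per country
u <- rnorm(n_countries, sd = 0.5)   # stable country traits the model never sees
panel <- expand.grid(country = seq_len(n_countries), year = years)
panel$x <- x[panel$country]
panel$score <- 5.5 + 1.0 * panel$x + u[panel$country] +
  rnorm(nrow(panel), sd = 0.1)      # small year-to-year noise

# Next year's score for the same country (NA in each country's last year)
panel <- panel[order(panel$country, panel$year), ]
panel$score_next <- ave(panel$score, panel$country,
                        FUN = function(s) c(s[-1], NA))
panel <- panel[!is.na(panel$score_next), ]

# Temporal split: fit on earlier years, evaluate on later ones
train <- panel[panel$year < 2017, ]
test  <- panel[panel$year >= 2017, ]

rmse <- function(actual, predicted) sqrt(mean((actual - predicted)^2))

fit <- lm(score_next ~ x, data = train)
r2 <- summary(fit)$r.squared
rmse_model <- rmse(test$score_next, predict(fit, newdata = test))
rmse_naive <- rmse(test$score_next, test$score)  # "same as this year"

cat(sprintf("R^2 = %.2f | model RMSE = %.2f | naive RMSE = %.2f\n",
            r2, rmse_model, rmse_naive))
```

The structural model's error is driven by everything stable about a country that it cannot observe, while the naive forecast inherits all of that for free and is only wrong by the yearly noise. Under these assumptions the fit looks respectable and the baseline still wins comfortably, which is the same pattern the real data showed.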
Until next time :)

This analysis uses a custom dataset built from raw World Bank indicators and independent WHR survey responses, specifically to avoid the circular reasoning problem inherent in the WHR's published contribution scores. The dataset construction process is detailed in Part 2: The Frankenstein Dataset. Full R pipeline available on GitHub. Built with tidyverse, wbstats, countrycode, car, and Metrics.